Since Echo/Alexa does not (yet?) let programmers use audio level to determine presence, other means are required for now. I would prefer this to be automated, so I’ve been thinking about it a bit.

How do we determine presence in a room?

If we have enough HA devices and know the people around us, perhaps we can use the status of those devices.

Here’s an example. If my wife is in the bedroom after dinner, a particular dimming outlet is almost always on, and so is the TV in that room. And if it’s after 10pm and those devices have been turned off, it means she’s asleep… but still in that room.
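The bedroom rule above can be sketched as a small function. This is only an illustration in plain Python, not SmartThings code: the `BedroomState` type, the function name, and the 7pm "dinner is over" cutoff are all assumptions. Only the 10pm threshold comes from the example.

```python
from dataclasses import dataclass
from datetime import time


@dataclass
class BedroomState:
    outlet_on: bool  # the dimming outlet from the example
    tv_on: bool      # the bedroom TV
    now: time        # current local time of day


def bedroom_status(state, dinner_end=time(19, 0), bedtime=time(22, 0)):
    """Guess whether she is in the bedroom: 'present', 'asleep', or 'unknown'.

    dinner_end is an assumed cutoff; bedtime is the 10pm from the example.
    """
    # After dinner, either device being on suggests she is in the room.
    if state.now >= dinner_end and (state.outlet_on or state.tv_on):
        return "present"
    # After 10pm with both devices off: probably asleep, but still there.
    if state.now >= bedtime and not state.outlet_on and not state.tv_on:
        return "asleep"
    return "unknown"
```

Two simplifications to be aware of: the sketch only looks at the current on/off state rather than checking that the devices were on earlier in the evening, and it does not handle times after midnight.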

Likewise, if there’s motion in the kitchen/dining room area around 6:30-7pm, odds are she’s there. And in two months, when the kitchen has been redone and has smart switches, outlets and Hue lights, I could use their on/off status to say she is likely there. Or I could simply acknowledge that she cooks 70% of the suppers, so she’s likely to be there during that time span.

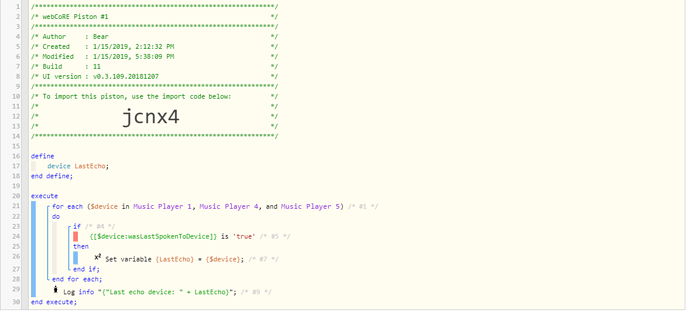

And so any EchoSpeaks messages that are for her should be directed to those areas under those conditions.
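One way to turn these hunches into a routing decision is to give each room a rough likelihood score and send the message to the best-scoring room. The sketch below is plain Python with hypothetical names (`kitchen_score`, `pick_room`). The 0.7 comes from the "cooks 70% of the suppers" figure; the 0.9 for motion or lights and the 0.5 threshold are made-up numbers you would want to tune.

```python
from datetime import time


def kitchen_score(now, motion, lights_on=False):
    """Rough likelihood that she is in the kitchen/dining area."""
    # Only the 6:30-7pm supper window counts in this sketch.
    if not (time(18, 30) <= now <= time(19, 0)):
        return 0.0
    # Baseline: she cooks about 70% of the suppers.
    score = 0.7
    # Motion or the (future) smart lights being on raises confidence.
    # The 0.9 is an assumed value, not a measured one.
    if motion or lights_on:
        score = 0.9
    return score


def pick_room(scores, threshold=0.5, default=None):
    """Return the room with the highest score, or default if none is convincing."""
    if not scores:
        return default
    room, best = max(scores.items(), key=lambda kv: kv[1])
    return room if best >= threshold else default
```

For example, `pick_room({"kitchen": kitchen_score(now, motion), "bedroom": 0.2})` would name the room whose Echo should speak, and returning `None` leaves room for a fallback such as announcing on every device.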

Here’s another: in our home office we have a new PC. I’d love to find a way to set it up so that ST could recognize whichever of us is logged on and using that machine at the moment, and register me or her as present in that room. Likewise, I keep my business suits in the closet in that office and get dressed for work there, so any Speaks messages meant for me should play there between 6:30 and 7am on weekdays.
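The office logic might look something like this. It is only a sketch: how the PC would report the logged-in user to ST is exactly the open question, so `logged_in_user` is a hypothetical input, and the function name and the `"me"` label are likewise made up for illustration.

```python
from datetime import datetime, time


def office_presence(when, logged_in_user=None):
    """Return the set of people assumed to be in the home office at `when`.

    logged_in_user is whatever the PC reports (hypothetical integration);
    None means nobody is logged on or the PC is not reporting.
    """
    people = set()
    # Whoever is actively logged on to the PC is taken to be in the office.
    if logged_in_user is not None:
        people.add(logged_in_user)
    # Weekdays (Mon=0 .. Fri=4), 6:30-7am: I'm getting dressed in there.
    is_weekday = when.weekday() < 5
    if is_weekday and time(6, 30) <= when.time() <= time(7, 0):
        people.add("me")
    return people
```

Calling `office_presence(datetime.now(), logged_in_user="wife")` would then tell the routing step whether a message for either of us should go to the office Echo.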